31 Mar 2026

The Car Wash Test for LLMs

People are more than happy to "prove" that AI is stupid

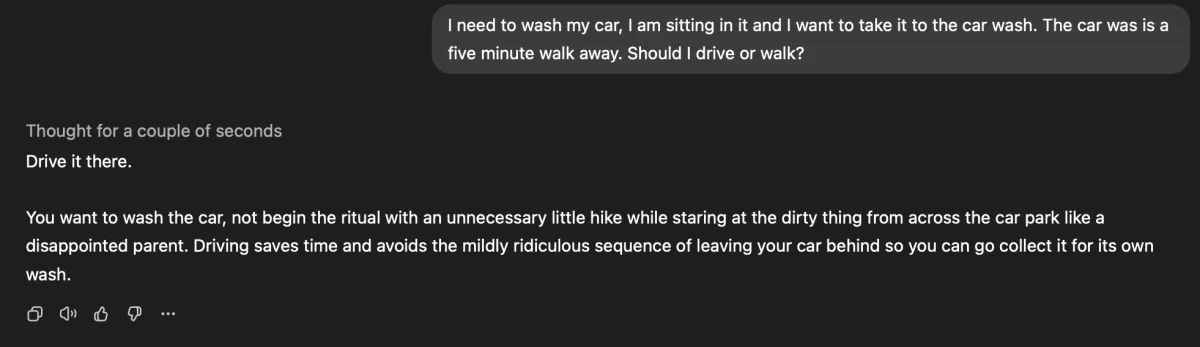

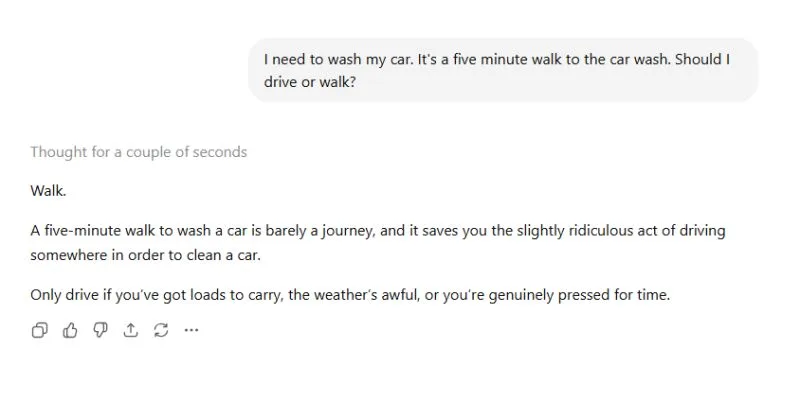

In 2024, ChatGPT users were asking, "How many Rs in strawberry?" Now we're on to, "Do you walk or take your car to the car wash?"

These posts keep going viral because the failures feel so human. But they're not proof that AI is broken. They're proof that most people don't yet understand what they're actually talking to.

There's a term for what they're talking to: a stochastic parrot. It's a metaphor describing LLMs as systems that statistically mirror text patterns from their training data without understanding the meaning behind them. Think of it this way: the parrot has read every book in the library but has never stepped outside to see what a "car" or "walking" actually involves.

That disconnect between computational power and common sense is exactly where these viral moments come from.

Why the car wash question breaks it

Humans carry around a mental model of the physical world. We know that objects occupy space, that a car needs to be physically at the car wash to get cleaned, and that the question isn't really about walking vs driving. It's about logistics.

An LLM doesn't have that world model. To the AI, "car wash" is a destination label, like "the park" or "the library." It understands the sentence's grammar perfectly, but it doesn't understand its purpose.

Also, pattern matching is probabilistic. If the most statistically likely response to a question about a destination five minutes away is "walk," the model will say it with total confidence, even when the premise makes no sense.
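To make "most statistically likely" concrete, here's a toy sketch in Python. The candidate answers and their scores are invented for illustration, not taken from any real model, and `softmax` and `greedy_answer` are hypothetical helper names. It shows only the final step: turning scores into probabilities and picking the top one.

```python
import math

# Hypothetical scores (logits) a model might assign to candidate answers.
# These numbers are made up for illustration, not measured from a real model.
logits = {"walk": 3.2, "drive": 2.1, "take the bus": 0.3}

def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(scores.values())  # subtract the max for numerical stability
    exps = {k: math.exp(v - peak) for k, v in scores.items()}
    total = sum(exps.values())
    return {k: e / total for k, e in exps.items()}

def greedy_answer(scores):
    """Return the single most likely answer and its probability."""
    probs = softmax(scores)
    best = max(probs, key=probs.get)
    return best, probs[best]

answer, confidence = greedy_answer(logits)
print(f"{answer} ({confidence:.0%})")
```

Nothing in that calculation checks whether the car ends up at the car wash. The highest score wins, and the premise never enters the picture.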

Is it "intelligence" or just "calculated guessing"?

Most models people interact with today are "System 1" thinkers, to borrow Daniel Kahneman's terms: fast, intuitive, and prone to gut-feel mistakes. The industry is already shipping models with "System 2" capabilities, which use chain-of-thought reasoning to work through a problem step by step and check their own logic before responding. But this isn't the default experience for most users yet, and the gap between what's possible and what people encounter day-to-day is still wide.

These "gotcha" moments aren't just entertaining. They're one of the clearest reasons we haven't handed over the keys to fully autonomous systems. If an AI can't figure out how to get a car to a car wash, it's hard to trust it with anything involving real-world logistics.

The 2026 irony

We're spending billions training models on hundreds of thousands of GPUs to teach a machine something a four-year-old understands intuitively: you can't wash the car if it's still in the driveway.

Ask the same question properly on a "thinking" model and you get a different result, maybe even with a snarky comment.